Redshift unload a table

8/7/2023

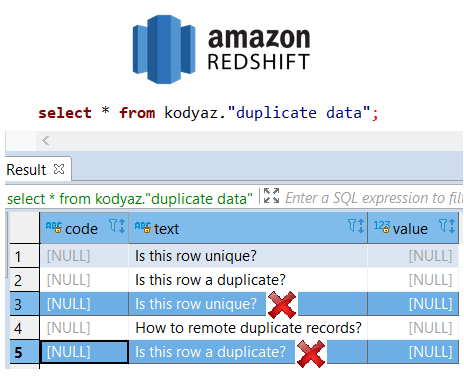

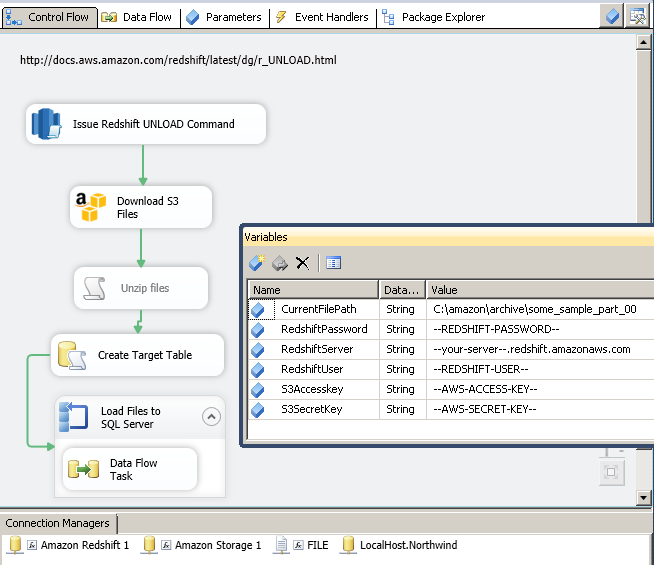

- Auto Import Data into Amazon Redshift with Skyvia
- Load Data from Amazon S3 to Redshift Using COPY Command
- How to Load CSV File into Amazon Redshift
- How to Export and Import CSV Files into Redshift in Different Ways

In this article, we are going to learn about Amazon Redshift and how to work with CSV files. We will see some of the ways to import data into a Redshift cluster from an S3 bucket, as well as to export data from Redshift to an S3 bucket. This article is written for beginners and intermediate users and assumes that you have some basic knowledge of AWS and Python.

How the UNLOAD command is used, and for what purpose, varies with the scenario. Consider one scenario in which we have to unload a table in Redshift to a CSV file in an S3 bucket. For example, we have a table named "EDUCBA_Articles", and we have to unload it to a CSV file at the S3 location s3://EducbaBucket/myUnloadFolder/.

The parameters most relevant to this scenario are:

REGION 'AWS region': This parameter specifies the AWS region of the destination S3 bucket where the output files are written. We have to specify it whenever the S3 bucket and the Redshift warehouse are in different AWS regions.

DELIMITER 'character': This parameter specifies the single ASCII character used to separate fields in the output files. The most commonly used delimiters are a comma (,), a tab (\t), and a pipe symbol (|).

MANIFEST: If we specify this parameter, the unload creates a manifest file alongside the output data files. The manifest is written in JSON text format and lists the URL of each output data file that was unloaded from the Redshift data warehouse and stored in Amazon S3.

HEADER: If we specify this parameter, the column names are written as a header line at the top of each output file, followed by the data.
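As a minimal sketch of how these parameters fit together, the Python helper below assembles an UNLOAD statement for the "EDUCBA_Articles" scenario. The function name `build_unload_sql` and the IAM role ARN are hypothetical placeholders, not part of the original example, and the sketch assumes that the statement authorizes access with an IAM role. It only builds the SQL text; running it against a cluster is left to whichever client you use.

```python
def build_unload_sql(query, s3_prefix, iam_role, delimiter=",",
                     header=True, manifest=False, region=None):
    """Assemble a Redshift UNLOAD statement as text (illustrative helper)."""
    # The query is passed to UNLOAD inside single quotes, so any single
    # quote in the query itself has to be doubled.
    escaped_query = query.replace("'", "''")
    parts = [
        f"UNLOAD ('{escaped_query}')",
        f"TO '{s3_prefix}'",
        f"IAM_ROLE '{iam_role}'",
        "FORMAT AS CSV",
    ]
    if delimiter != ",":
        # CSV already defaults to a comma; only override when asked.
        parts.append(f"DELIMITER AS '{delimiter}'")
    if header:
        parts.append("HEADER")      # write column names as the first line
    if manifest:
        parts.append("MANIFEST")    # also write a JSON list of output files
    if region:
        parts.append(f"REGION '{region}'")  # needed when the bucket is in another region
    return "\n".join(parts) + ";"


sql = build_unload_sql(
    "SELECT * FROM EDUCBA_Articles",
    "s3://EducbaBucket/myUnloadFolder/",
    "arn:aws:iam::123456789012:role/MyUnloadRole",  # hypothetical role ARN
    manifest=True,
)
print(sql)
```

Passing `region="us-west-2"` (or any other region name) appends the REGION clause, which only matters when the bucket and the cluster are in different regions.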

When the unloaded output is partitioned, Amazon Redshift follows the same conventions as Apache Hive for creating partitions and storing the data.
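The Hive convention stores each partition under a folder named `column=value`. The short Python sketch below shows what such a prefix looks like. The helper name and the partition columns `year` and `month` are hypothetical, and the code only illustrates the naming scheme, not how Redshift produces it internally.

```python
def hive_partition_prefix(base_prefix, partition_values):
    """Build a Hive-style partition prefix such as .../year=2023/month=8/."""
    # One "column=value" folder per partition column, in the given order.
    segments = [f"{column}={value}" for column, value in partition_values.items()]
    return base_prefix.rstrip("/") + "/" + "/".join(segments) + "/"


prefix = hive_partition_prefix(
    "s3://EducbaBucket/myUnloadFolder/",
    {"year": 2023, "month": 8},  # hypothetical partition columns
)
print(prefix)  # s3://EducbaBucket/myUnloadFolder/year=2023/month=8/
```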
